DeepSpeed Compression: A composable library for extreme

By A Mystery Man Writer

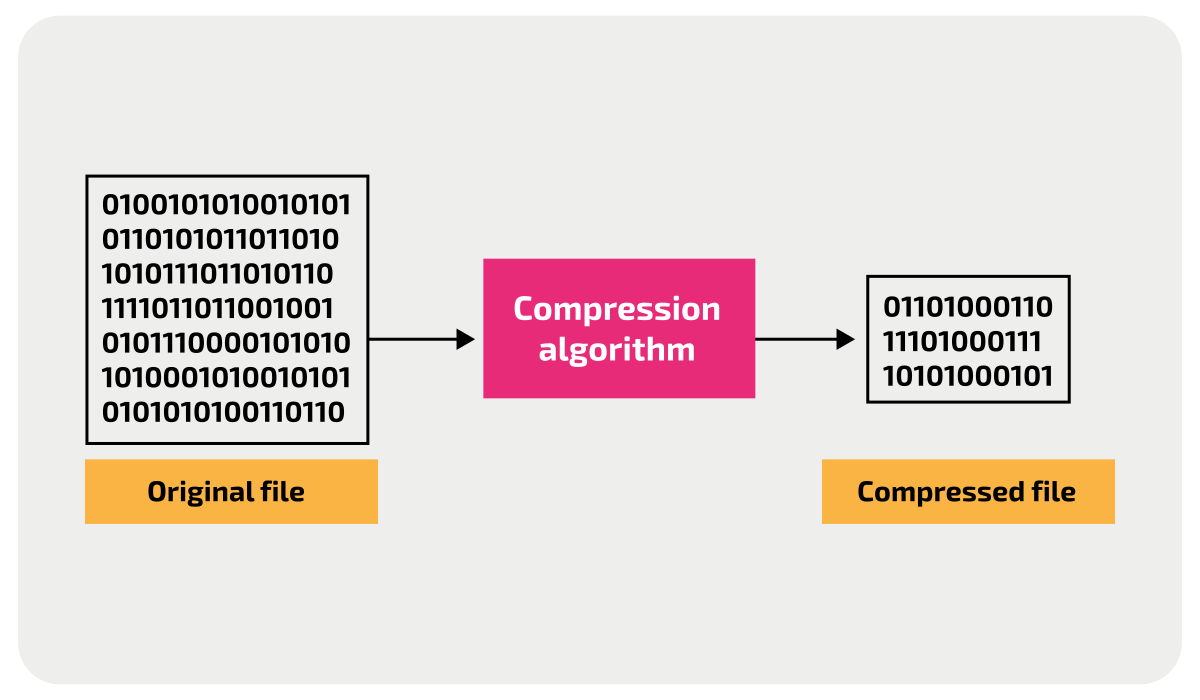

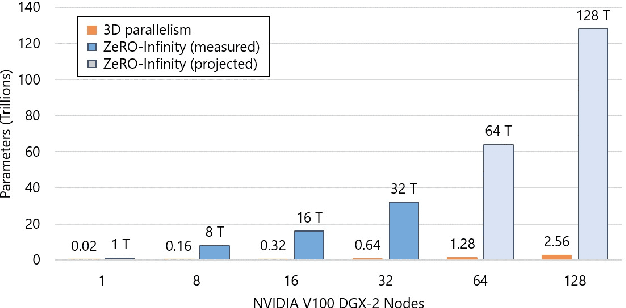

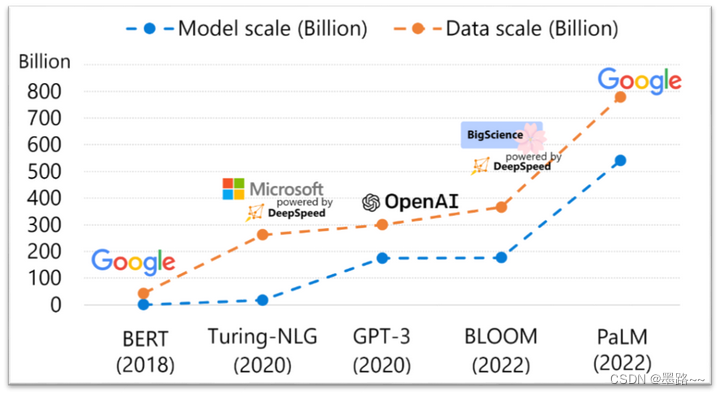

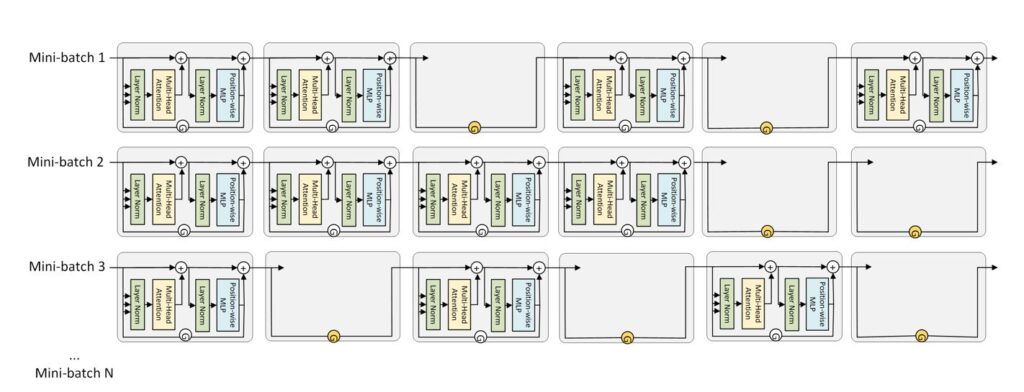

Large-scale models are revolutionizing deep learning and AI research, driving major improvements in language understanding, creative text generation, multilingual translation, and more. Despite these capabilities, the models’ large size creates latency and cost constraints that hinder the deployment of applications built on top of them. In particular, increased inference time and memory consumption […]
